What is happening with Sigma Tau

I have not been actively working on Sigma Tau recently. Currently I am learning Vulkan, not because I need it for Sigma Tau but because I want to learn it, although it might turn out to be useful for Sigma Tau. Believe it or not, I think Vulkan is simpler than OpenGL, despite its verbosity and extensiveness. OpenGL is less verbose but confusing and not very helpful; the Vulkan API is very clean and clear.

I have gone back to 2D for Sigma Tau, rather than full 3D. I don't believe I ever mentioned that I was planning on 3D, but I did share some screenshots which showed full 3D.

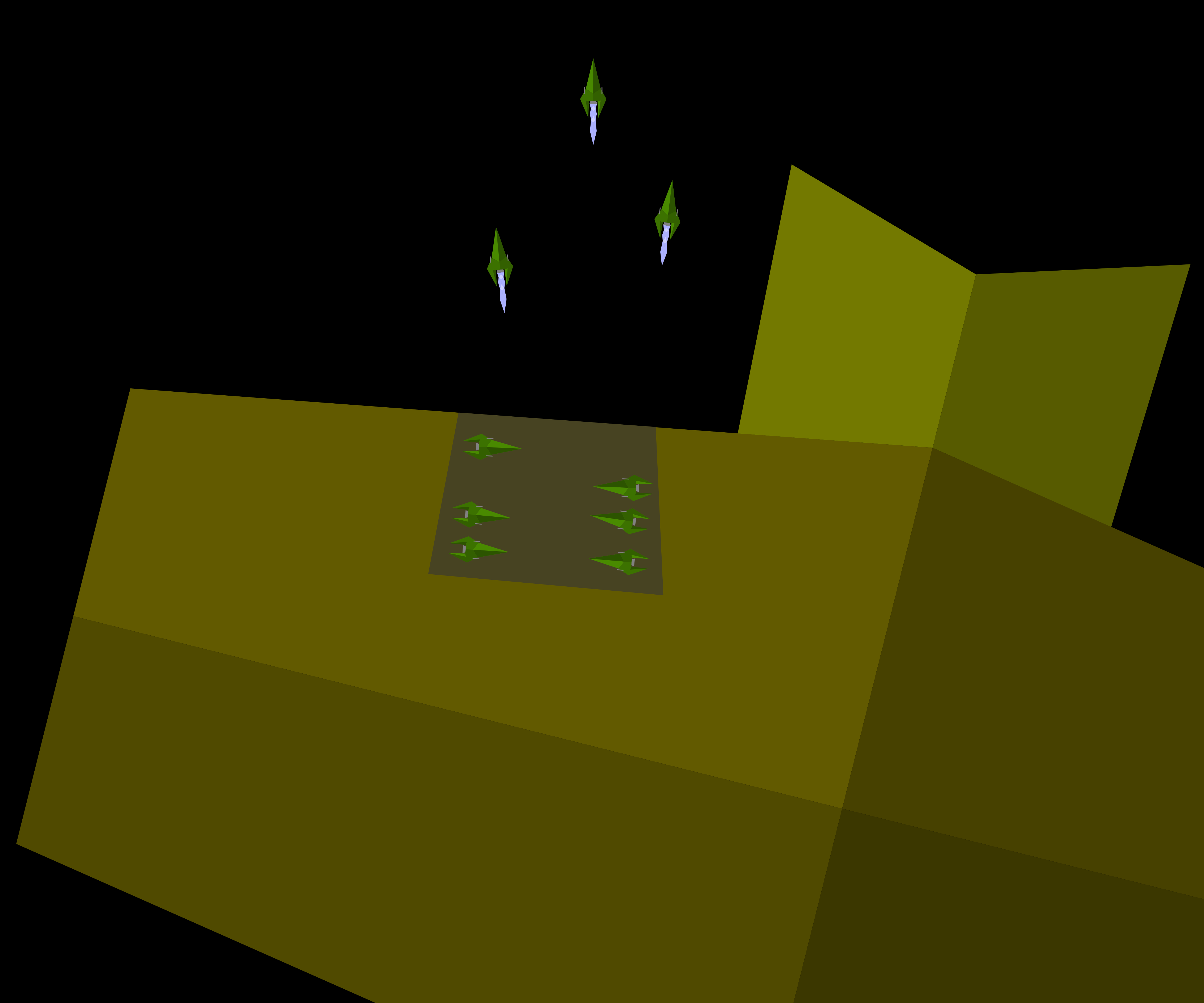

I made this mock-up of what I am thinking and shared it with my brother a while ago; I realized I should share it here too. I, for one, think it looks pretty cool.

I am not looking for realistic graphics; I am more interested in consistency, and I am not a 3D modeler. I also quite appreciate vector graphics, and high-definition graphics are not the key to suspension of disbelief.

The common radar-like views will be orthographic top-down views of the actual 3D space, with special handling for objects that get too small (such as bullets, or anything shrunk by zooming out), which will be overlaid with indicators. Objects will be low-poly 3D meshes with the largest slice always on the 0 plane, so that the orthographic top-down view shows the 2D hit-box. Special handling will exist for docking bays (as seen in the picture), and yes, docking will be done manually (think 2D Elite Dangerous, more or less).
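A minimal sketch of the radar-view idea, assuming a camera described by a world-space center and a zoom factor in pixels per world unit (all names and the 4-pixel threshold are hypothetical): the orthographic top-down projection simply drops z, and anything whose projected radius falls below the threshold gets an indicator instead of its mesh.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical threshold: objects smaller than this on screen get an indicator.
MIN_RADIUS_PX = 4.0


@dataclass
class Camera:
    center_x: float   # world x shown at the middle of the screen
    center_y: float   # world y shown at the middle of the screen
    zoom: float       # pixels per world unit
    width_px: int
    height_px: int


def project_top_down(cam: Camera, x: float, y: float, z: float) -> tuple[float, float]:
    """Orthographic top-down projection: z is ignored entirely."""
    sx = (x - cam.center_x) * cam.zoom + cam.width_px / 2
    # Screen y grows downward while world y grows "up" on the radar.
    sy = (cam.center_y - y) * cam.zoom + cam.height_px / 2
    return sx, sy


def needs_indicator(cam: Camera, radius: float) -> bool:
    """True when an object of this world-space radius would be too small to read."""
    return radius * cam.zoom < MIN_RADIUS_PX
```

Because the projection is orthographic, zooming only changes `zoom`, so the same threshold check covers both tiny objects (bullets) and objects that shrink as you zoom out.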

What do you think of this? Any difficulties (particularly in code-ability) you might anticipate?

Comments

So, what are we looking at? A bunch of ships in a docking bay of a station, and a few outside with engines firing? It would probably be easier to read in motion.

I don't see any issues with your method. 2D gameplay is a good way of doing things; it makes everything much easier and clearer. It also allows for better combat scenarios: in 3D there is so much space and so many directions that combat becomes a lot more complex, both in understanding the space and in the UI. Not to mention AI.

Actually, AI is a real concern: if you have these complex collision shapes, you need to think about how the AI will get around and dock with that.

As for Vulkan, I opted not to go with it, for compatibility with a wider range of hardware. I'm also not seeing that much difference from modern OpenGL, except for more control over certain bits and pieces. Binary bytecode input for shaders (SPIR-V) is nice; it saves the hassle of different drivers not all accepting your shader code. But I see nothing else that helps me (I see a lot of nice things if you want multi-threaded rendering, but that's out of my league).

Yes, that big thing is a station, that dark area on it is a cross-section view of a docking port. Those ships outside are flying with engines firing.

Vulkan has WAY better error reporting, for both the API (with validation layers) and shader code.

If I use Vulkan for Sigma Tau, then it will be executed on the proxy server (whether that is a full-blown ship-server or just a proxy). Unfortunately, VulkanES is not a thing... The output would then be streamed to the HTML/JS clients; the Terminals are HTML/JS clients and are expected to connect over LAN or localhost. I do not need the proxy server to run on as wide a range of hardware. I don't know that streaming is really practical, especially with such simple graphics; come to think of it, it is probably pretty impractical. I have not done any testing; I'm just learning Vulkan...

Hmm, polygon colliders would be more complicated for AI. Pathfinding would probably use simpler colliders (e.g. circles).
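For illustration, here is why circles are attractive for pathfinding: the only test needed is whether a straight segment passes within some radius of a point. A sketch, assuming the ship's own radius has already been added to each obstacle's radius so the ship can be treated as a point (the function name is made up):

```python
import math


def segment_hits_circle(ax, ay, bx, by, cx, cy, r) -> bool:
    """True if the segment A-B passes within distance r of the circle center C."""
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    if length_sq == 0.0:
        # Degenerate segment: just test the single point A.
        return math.hypot(cx - ax, cy - ay) <= r
    # Parameter of the point on A-B closest to C, clamped to the segment.
    t = ((cx - ax) * dx + (cy - ay) * dy) / length_sq
    t = max(0.0, min(1.0, t))
    px, py = ax + t * dx, ay + t * dy
    return math.hypot(cx - px, cy - py) <= r
```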

On that note, what algorithm is used for this kind of pathfinding anyway? A* is specifically for grid-type arrangements, not free space.

> A* is specifically for grid-type arrangements, not free space.

That's not quite right; there's nothing in A* that requires a grid. For example, it can pathfind across a graph of arbitrary nodes with the heuristic cost between nodes encoded with the edges between nodes, so long as you have a way to enumerate the edges leaving a given node. I use this for the automatic damage control robot in SNIS, where it pathfinds its way across a network of nodes to navigate around in the engine compartment. So if you can construct a graph of waypoints and have a heuristic cost between waypoints, you can run A* on it.

Not to say there aren't particular implementations of A* that assume a grid, but that's down to the implementation, nothing in the algorithm requires it. Here's my C implementation that does not assume a grid: https://github.com/rustyrussell/ccan/tree/master/ccan/a_star
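To make the "no grid required" point concrete, here is a small, generic Python sketch (not the ccan C implementation linked above): the graph is only ever seen through a `neighbors(node)` callback that yields `(next_node, edge_cost)` pairs, plus a `heuristic(node, goal)` estimate of the remaining cost. The waypoint names and coordinates in the example are invented.

```python
from __future__ import annotations

import heapq
import itertools
import math
from typing import Callable, Hashable, Iterable


def a_star(start: Hashable, goal: Hashable,
           neighbors: Callable[[Hashable], Iterable[tuple[Hashable, float]]],
           heuristic: Callable[[Hashable, Hashable], float]) -> list | None:
    """A* over an arbitrary graph; returns the node path, or None if unreachable.

    `heuristic(n, goal)` should never overestimate the remaining cost (and, for
    this closed-set version, be consistent); straight-line distance is both.
    """
    tie = itertools.count()              # tie-breaker so nodes are never compared
    open_heap = [(heuristic(start, goal), next(tie), start)]
    came_from = {}
    g = {start: 0.0}                     # best known cost from start to each node
    closed = set()
    while open_heap:
        _, _, node = heapq.heappop(open_heap)
        if node == goal:                 # walk the parent links back to start
            path = [node]
            while node in came_from:
                node = came_from[node]
                path.append(node)
            return path[::-1]
        if node in closed:               # stale heap entry, already expanded
            continue
        closed.add(node)
        for nxt, cost in neighbors(node):
            new_g = g[node] + cost
            if new_g < g.get(nxt, math.inf):
                g[nxt] = new_g
                came_from[nxt] = node
                heapq.heappush(open_heap, (new_g + heuristic(nxt, goal), next(tie), nxt))
    return None


# Example: four invented waypoints connected as a loop A-B-C-D-A.
WAYPOINTS = {"A": (0, 0), "B": (4, 0), "C": (4, 3), "D": (0, 2)}
EDGES = {"A": ["B", "D"], "B": ["A", "C"], "C": ["B", "D"], "D": ["A", "C"]}


def dist(a, b):
    return math.dist(WAYPOINTS[a], WAYPOINTS[b])


def waypoint_neighbors(n):
    return [(m, dist(n, m)) for m in EDGES[n]]


path = a_star("A", "C", waypoint_neighbors, dist)  # A-D-C (about 6.12) beats A-B-C (7)
```

Nothing in `a_star` knows about coordinates; the waypoint positions only appear inside the callbacks, which is exactly what lets the same code run on a grid, a waypoint network, or a graph built on the fly.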

"Graph of arbitrary nodes with the heuristic cost between nodes", yes, well said, I could not figure out how to word that, so I abbreviated.

But how do you pathfind in open space, where no predefined points can exist because objects are dynamic? I guess if objects move unpredictably, a perfect algorithm is impractical. Probably an algorithm which determines an ideal near-future path.

Thinking out loud: you could try a path straight to the target; if it overlaps with any close objects or any large objects (e.g. a planet), try the paths which go around it and use the shortest one (doing this recursively for subsequent objects). I'm not sure how you would curve a path around an object, though. Also, gravity wells would really complicate things... I need to do some research.
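On curving a path around an object: if obstacles are approximated as circles, the shortest way past one runs straight to a tangent point, follows the arc, and leaves along another tangent. A sketch of the tangent-point calculation (hypothetical helper name; gravity is ignored entirely):

```python
import math


def tangent_points(px, py, cx, cy, r):
    """Points where lines from P just touch the circle (C, r), or None if P is inside.

    A path around the circle can head straight to one of these points,
    follow the arc, and leave along a tangent toward the next target.
    """
    dx, dy = px - cx, py - cy
    d = math.hypot(dx, dy)
    if d <= r:
        return None                    # P is inside or on the circle
    alpha = math.acos(r / d)           # angle at C between C->P and C->tangent point
    theta = math.atan2(dy, dx)         # direction from C toward P
    return [(cx + r * math.cos(theta + s * alpha),
             cy + r * math.sin(theta + s * alpha)) for s in (1, -1)]
```

The two results are the left-hand and right-hand ways around; computing the path length for each and keeping the shorter one matches the "use the shortest path" step.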

Ah, yeah... so we are in violent agreement, ha. Well, I can tell you what I do (which is not entirely satisfactory... and my solution is 3D, so probably both more complicated than what you need and also less). For gross navigation, my NPC ships have a series of waypoints that form a route. They navigate towards the next waypoint in their route via something like this:

The NPCs have an "AI stack" with the most urgent goal at the top. If they get attacked, some evasion frame gets pushed on top of the stack, superseding their normal route navigation stack. It kind of works ok. TBH, human players have little enough insight into motivations of NPC movements that as long as whatever the NPCs do isn't insane, it's probably acceptable. My NPCs are able to drive from waypoint to waypoint, largely assuming that there are no obstacles between waypoints, with some special code to avoid planets, but not much more than that. I don't use A* for NPC ship navigation. Typically NPCs have routes set up to drive from this planet to that planet to that starbase to that other planet and back to the original planet, and repeat, or something along those lines.
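A rough sketch of the "AI stack" described above, with made-up class names and no actual steering. It is not SNIS's code, just an illustration of how pushing an evasion goal on top suspends route following until that goal finishes:

```python
from __future__ import annotations

from dataclasses import dataclass, field

# All names here are hypothetical; this only illustrates the stack idea.


@dataclass
class Goal:
    name: str

    def step(self, ship: Ship) -> bool:
        """Run one tick of this goal; return True when the goal is finished."""
        raise NotImplementedError


@dataclass
class FollowRoute(Goal):
    waypoints: list = field(default_factory=list)
    index: int = 0

    def step(self, ship: Ship) -> bool:
        ship.target = self.waypoints[self.index]
        if ship.position == ship.target:  # arrived (real code would use a tolerance)
            self.index = (self.index + 1) % len(self.waypoints)  # loop the route
        return False                      # a patrol route never finishes


@dataclass
class Evade(Goal):
    ticks_left: int = 3

    def step(self, ship: Ship) -> bool:
        ship.target = None                # evasive maneuvering would happen here
        self.ticks_left -= 1
        return self.ticks_left <= 0


@dataclass
class Ship:
    position: tuple
    target: tuple | None = None
    ai_stack: list = field(default_factory=list)  # last element = most urgent goal

    def attacked(self) -> None:
        self.ai_stack.append(Evade("evade"))  # supersedes whatever was on top

    def tick(self) -> None:
        if self.ai_stack and self.ai_stack[-1].step(self):
            self.ai_stack.pop()           # finished: the goal underneath resumes
```

Only the top goal runs each tick, so the route's progress (`index`) is preserved untouched while the ship evades.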

Negotiating close quarters, (e.g. maneuvering within some complex large 3D space station containing an internal 3d maze, or convincingly docking at a rotating starbase, etc.) is beyond what I have so far been able to get my NPCs to do, either from lack of trying, or lack of knowing how, or lack of willingness to even try, depending on the specific problem.

Lol.

That's basically the logical solution I was coming up with, though I was trying to fully solve the problem (including optimal routes through mazes). Gravity really makes the problem a lot harder, though...

You can make a dynamic graph for A*, but it will be complex. I have done quite a bit of A*, even with multiple levels (a large-range A* net for long-range nav, and short-range tile-based A* for details), but that was on a static map and used a lot of precomputed data.

EE (EmptyEpsilon) uses the "draw line, avoid what we intersect, repeat until no intersection" approach. It works well because space is very empty.

The details are a bit more complex, to avoid testing every object against every line: https://github.com/daid/EmptyEpsilon/blob/master/src/pathPlanner.cpp
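A minimal sketch of the "draw line, avoid what we intersect, repeat" approach, assuming circular obstacles already grown by the ship's radius. It is not EmptyEpsilon's pathPlanner.cpp (which also avoids testing every object against every line); all names, the `margin`, and the recursion limit are hypothetical:

```python
import math


def closest_point(a, b, c):
    """Point on segment A-B closest to C, plus its parameter t in [0, 1]."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    length_sq = dx * dx + dy * dy
    t = 0.0 if length_sq == 0 else ((c[0] - a[0]) * dx + (c[1] - a[1]) * dy) / length_sq
    t = max(0.0, min(1.0, t))
    return (a[0] + t * dx, a[1] + t * dy), t


def first_blocker(a, b, obstacles):
    """The circle crossed by A-B whose closest approach is nearest A, or None."""
    best, best_t = None, math.inf
    for center, r in obstacles:        # obstacles: list of ((x, y), radius)
        p, t = closest_point(a, b, center)
        if math.dist(p, center) < r and t < best_t:
            best, best_t = (center, r, p), t
    return best


def plan(a, b, obstacles, margin=1.0, depth=0, max_depth=8):
    """Draw a line; if it hits something, bend it around and repeat on each half.

    Stops bending after max_depth levels and returns the straight segment,
    so the result may still be blocked in pathological cases.
    """
    hit = first_blocker(a, b, obstacles)
    if hit is None or depth >= max_depth:
        return [a, b]
    (cx, cy), r, (px, py) = hit
    ox, oy = px - cx, py - cy          # push out on the side the line already passes
    norm = math.hypot(ox, oy)
    if norm == 0:                      # line goes through the center: pick a side
        ox, oy = -(b[1] - a[1]), b[0] - a[0]
        norm = math.hypot(ox, oy)
        if norm == 0:
            return [a, b]
    detour = (cx + ox / norm * (r + margin), cy + oy / norm * (r + margin))
    first = plan(a, detour, obstacles, margin, depth + 1, max_depth)
    second = plan(detour, b, obstacles, margin, depth + 1, max_depth)
    return first[:-1] + second         # drop the duplicated detour point
```

Because space is mostly empty, most calls return immediately with a straight segment, which is why this cheap approach works well in practice.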

So you, @daid, have done something similar to what I was thinking.

Ah, this is interesting: https://gamedev.stackexchange.com/a/132404

Basically, use A* with every vertex of every shape as a node. To optimize, I would calculate the graph lazily (as needed) rather than trying to store and update the entire graph. Also, because ships have size, you would need to use points offset from the obstacle vertices.
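Two pieces of that approach, sketched under assumptions: offsetting the vertices of a convex, counter-clockwise obstacle outward by the ship's radius, and building the A* neighbor function lazily so visibility is only computed for nodes A* actually expands. `is_clear(a, b)` stands in for whatever ray-cast the game uses; all names are hypothetical.

```python
import math
from functools import lru_cache


def _outward_normal(ax, ay, bx, by):
    """Unit normal pointing out of a counter-clockwise polygon for edge A->B."""
    dx, dy = bx - ax, by - ay
    length = math.hypot(dx, dy)
    return dy / length, -dx / length


def offset_convex_polygon(vertices, clearance):
    """Move each vertex outward so every edge ends up `clearance` further out.

    The vertex moves along the sum of its two edge normals, scaled by
    clearance / (1 + n1 . n2) so that both offset edges are exactly
    `clearance` away from the originals.
    """
    n = len(vertices)
    out = []
    for i, (vx, vy) in enumerate(vertices):
        prev_x, prev_y = vertices[i - 1]
        next_x, next_y = vertices[(i + 1) % n]
        n1 = _outward_normal(prev_x, prev_y, vx, vy)
        n2 = _outward_normal(vx, vy, next_x, next_y)
        k = clearance / (1.0 + n1[0] * n2[0] + n1[1] * n2[1])
        out.append((vx + k * (n1[0] + n2[0]), vy + k * (n1[1] + n2[1])))
    return out


def make_lazy_neighbors(nodes, is_clear):
    """Return neighbors(node) for A*; visibility is computed on first use and cached."""
    @lru_cache(maxsize=None)
    def neighbors(node):
        return tuple((other, math.dist(node, other))
                     for other in nodes if other != node and is_clear(node, other))
    return neighbors
```

If obstacles move, the cached neighbor lists go stale; calling `neighbors.cache_clear()` before replanning is one simple option.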

I think that could be optimized and improved further: rather than a graph to every vertex, make a graph using merely both sides of every object.

Something like this:

This algorithm requires an optimized ray-cast function, where the starting and ending points can be adjacent to a mesh rather than fixed points.
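One possible reading of the "both sides of every object" idea (this interpretation is an assumption): when a ray from the current point hits a convex obstacle, the only candidate waypoints are its two silhouette vertices as seen from that point, rather than every vertex. A sketch that finds them (hypothetical names):

```python
import math


def silhouette_vertices(px, py, polygon):
    """The two outermost vertices of a convex polygon as seen from P (outside it).

    Angles are measured relative to the direction toward the vertex average,
    which lies inside a convex polygon, so no angle wraps past +/- pi.
    Returns (clockwise-most, counter-clockwise-most).
    """
    cx = sum(x for x, _ in polygon) / len(polygon)
    cy = sum(y for _, y in polygon) / len(polygon)
    base = math.atan2(cy - py, cx - px)

    def rel_angle(v):
        a = math.atan2(v[1] - py, v[0] - px) - base
        return (a + math.pi) % (2 * math.pi) - math.pi   # wrap to [-pi, pi)

    return min(polygon, key=rel_angle), max(polygon, key=rel_angle)
```

Each silhouette vertex (offset by the ship's radius) would then become a graph node, and the ray-cast is repeated from it toward the target.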

Is that understandable? It is a rather abstract idea in my head, and it is fairly difficult to put into an explanation (: